Music Suite

Music Suite is a language for describing music, based on Haskell.

It allow representation and manipulation of music in a very general sense, that is compatible with standard notation and supporting a variety of import and export formats. The use of Haskell allow for music to be created, transformed or analyzed using the full expressive power of the Haskell language.

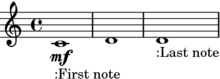

An example

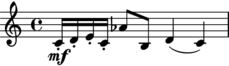

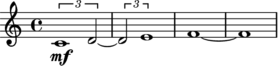

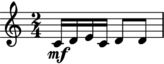

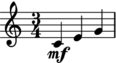

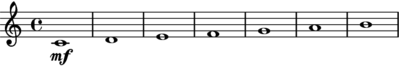

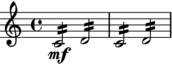

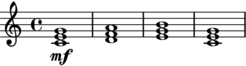

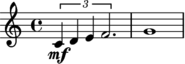

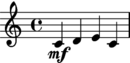

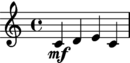

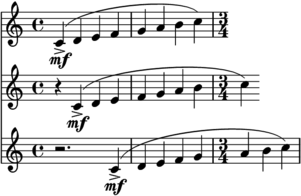

To generate music we write an expressions such as this one:

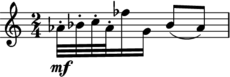

To transform music, we write a function. For example the following function halves all durations and transposes all pitches up a minor sixth:

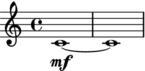

up m6 . compress 2Applied to the above music we get:

Input and output

The Music Suite works well with the following input and output formats.

- MusicXML

- Lilypond

- ABC notation

- MIDI

Other formats are being added in the near future, see Import and export for a more detailed overview.

Version numbers and stability

The Music Suite consists of a group of packages released concurrently under a common version number. The music-suite acts as a meta-package that includes all stable packages of the Suite.

Please note that the Suite is quite usable, parts of it are still experimental, and we expect the API to change slightly with every release up to v2.0.0 (think of it as optimistic versioning). If you have any problems with upgrading from a previous version, please post to the discussion group.

Contributing to the Suite

If you are interested in contributing to the Suite, please join the Github organization (see the link below). In addition to code, we appreciate contributions in the form of tutorials, examples or musical compositions. Hopefully we may soon have a showcase of works created with Music Suite, like the Diagrams gallery.

More information

All releases on Hackage

For more examples, see music-preludes/examples directory. You can download this directory from Hackage using

cabal unpack music-preludes.For bug reports, please use the relevant Github tracker, i.e. for

music-scoreuse https://github.com/music-suite/music-score/issuesFor questions, feedback and general discussion, see the Google discussion group

First steps

Installing the Suite

The Music Suite depends on the Haskell platform.

While not strictly required, Lilypond is highly recommended as it allow you to preview musical scores. See Import and Export for other formats.

To install the suite, simply install the Haskell platform, and then run:

cabal install music-suiteUsing Music files

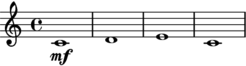

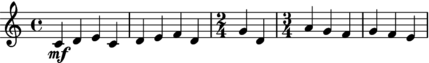

A piece of music is described by a expressions such as this one:

c |> d |> eThe simplest way to render this expression is to save it in a file named foo.music (or similar) and convert it using music2pdf foo.music. This should render a file called foo.pdf.

There are several programs for converting music files:

music2midi– converts to MIDImusic2musicxml– converts to MusicXMLmusic2ly– converts to Lilypond input filesmusic2pdf– converts to PDF (using Lilypond)music2png– converts to PNG (using Lilypond)

Using Haskell files

Alternatively, you can create a file called test.hs (or similar) with the following structure:

import Music.Prelude

example = c |> d |> e

main = open exampleThen either execute it using:

$ runhaskell test.hsor compile and run it with

$ ghc --make test

$ ./testIn this case the resulting program will generate and open a file called test.pdf containing the output seen above.

Music files and Haskell files using open are equivalent in every aspect. In fact, the music2... programs are simple utilities that substitutes a single expression into a Haskell module such as the one above and executes the resulting main function.

Interactive use

An advantage of Haskell files is that you can load them into a Haskell interpreter (i.e. GHCI).

TODO configuration

In the interpreter, the display and audify functions are the most convenient ways of inspecting music. Note that because these functions are overloaded you may be required to provide a specific type signature.

>>> display $ ([c,g,bb]^.chord :: Chord Pitch)

>>> audify $ (c :: Pitch)

>>> display $ ([c,g,bb]^.chord :: Chord Pitch)

>>> audify $ [1,2,1]^.rhythmWriting music

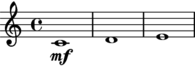

Time and Duration

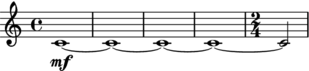

A single note can be entered by its name. This will render a note in the middle octave with a duration of one. Note that note values and durations correspond exactly, a duration of 1 is a whole note, a duration of 1/2 is a half note, and so on.

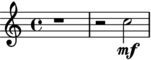

To change the duration of a note, use stretch or compress. Note that:

compress x = stretch (1/x)for all values of x.

TODO delay

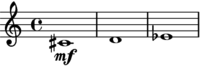

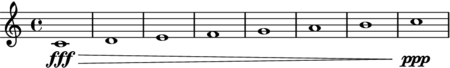

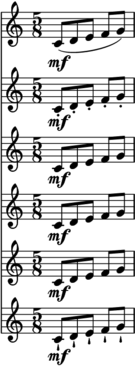

Offset and duration is not limited to simple numbers. Here are some more complex examples:

As you can see, note values, tuplets and ties are added automatically

The |* and |/ operators can be used as shorthands for delay and compress.

Allthough the actual types are more general, you can think of c as an expression of type Score Note, and the transformations as functions Score Note -> Score Note.

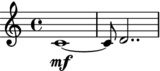

Composition

Music expressions can be composed <>:

TODO fundamentally, <> is the only way to compose music...

Or in sequence using |>:

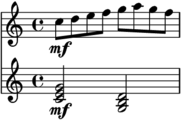

Or partwise using </>:

Here is a more complex example:

let

scale = scat [c,d,e,f,g,a,g,f]|/8

triad a = a <> up _M3 a <> up _P5 a

in up _P8 scale </> (triad c)|/2 |> (triad g_)|/2As a shorthand for x |> y |> z .., we can write scat [x, y, z] (short for sequential concatenation).

For x <> y <> z .., we can write pcat [x, y, z] (short for parallel concatenation).

Actually, scat and pcat used to be called melody and chord back in the days, but I figured out that these are names that you actually want to use in your own code.

Pitch

Pitch names

To facilitate the use of non-standard pitch, the standard pitch names are provided as overloaded values, referred to as pitch literals.

To understand how this works, think about the type of numeric literal. The values 0, 1, 2 etc. have type Num a => a, similarly, the pitch literals c, d, e, f... have type IsPitch a => a.

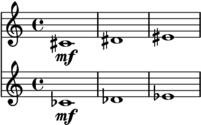

For Western-style pitch types, the standard pitch names can be used:

Pitch names in other languages work as well, for example ut, do, re, mi, fa, so, la, ti, si.

You can change octave using octavesUp and octavesDown:

There is also a shorthand for other octaves:

Sharps and flats can be added by the functions sharp and flat, which are written postfix thanks to some overloading magic.

You can also use the ordinary (prefix) versions sharpen and flatten.

As you might expect, there is also a shorthand for sharp and flat notes:

Here is an overview of all pitch notations:

sharpen c == c sharp == cs

flatten d == d flat == db

(sharpen . sharpen) c == c doubleSharp == css

(flatten . flatten) d == d doubleFlat == dssNote that cs == db may or may not hold depending on which pitch representation you use.

Interval names

Interval names are overloaded in a manner similar to pitches, and are consequently referred to as interval literals. The corresponding class is called IsInterval.

Here and elsewhere in the Music Suite, the convention is to follow standard theoretical notation, so minor and diminished intervals are written in lower-case, while major and perfect intervals are written in upper-case. Unfortunately, Haskell does not support overloaded upper-case values, so we have to adopt an underscore prefix:

minor third == m3

major third == _M3

perfect fifth == _P5

diminished fifth == d5

minor ninth == m9Similar to sharpen and flatten, the augment and diminish functions can be used to alter the size of an interval. For example:

let

intervals = [diminish _P5, (diminish . diminish) _P5]

in scat $ fmap (`up` c) intervalsYou can add pitches and intervals using the .-. and .+^ operators. To memorize these operators, think of pitches and points . and intervals as vectors ^.

Qualified pitch and interval names

There is nothing special about the pitch and interval literals, they are simply values exported by the Music.Pitch.Literal module. While this module is reexported by the standard music preludes, you can also import it qualified if you want to avoid bringing the single-letter pitch names into scope.

Pitch.c |> Pitch.d .+^ Interval.m3TODO overloading, explain why the following works:

return (c::Note) == (c::Score Note)Dynamics

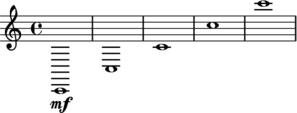

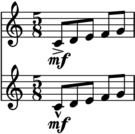

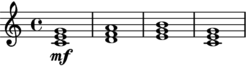

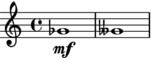

Dynamic values are overloaded in the same way as pitches. The dynamic literals are defined in Music.Dynamics.Literal and have type IsDynamics a => a.

An overview of the dynamic values:

TODO other ways of applying level

Articulation

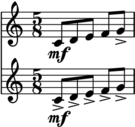

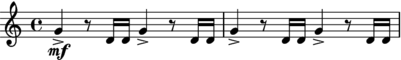

Some basic articulation functions are legato, staccato, portato, tenuto, separated, staccatissimo:

legato (scat [c..g]|/8)

</>

staccato (scat [c..g]|/8)

</>

portato (scat [c..g]|/8)

</>

tenuto (scat [c..g]|/8)

</>

separated (scat [c..g]|/8)

</>

staccatissimo (scat [c..g]|/8)Applying articulations over multiple parts:

let

p1 = scat [c..c']|/4

p2 = delay (1/4) $ scat [c..c']|/4

p3 = delay (3/4) $ scat [c..c']|/4

in (accent . legato) (p1 </> p2 </> p3)Parts

Space

TODO

Tremolo

TODO chord tremolo

Slides and glissando

Harmonics

Use the harmonic function:

TODO artificial harmonics

Text

TODO

Chords

Note with the same onset and offset are rendered as chords by default. If you want to prevent this you must put them in separate parts.

Or, equivalently:

TODO how part separation works w.r.t. division etc

Rests

Similar to chords, there is usually no need to handle rests explicitly.

TODO add explicit rests etc

Musical aspects

Pitch

number quality name accidental number invert simple octaves asPitch asAccidental

Articulation

Dynamics

Parts

Space

Time and structure

Time, duration and span

Time points and vectors are represented by two types Time and Duration. The difference between these types is similar to the distinction between points and vectors in ordinary geometry. One way of thinking about time vs. duration is that duration are always relative (i.e. the duration between the start of two notes), while time is absolute.

Time points form an affine space over durations, so we can use the operators .+^ and .-. to convert between the two.

The Span type represents a slice of time. We can represent spans in exactly three ways: as two points representing onset and offset, as one point representing onset and a duration, or alternatively as a point representing offset and a duration. To convert between these representations, we can use onsetAndOffset, onsetAndDuration and durationAndOffset, which are isomorphisms using the definition from the lens package.

Rests, Notes and Chords

Voices

A Voice represents a single voice of music. It consists of a sequence of values with duration.

It can be converted into a score by stretching each element and composing in sequence.

Segment and Linear

Behavior and Reactive

Tracks

A Track is similar to a score, except that it events have no offset or duration. It is useful for representing point-wise occurrences such as samples, cues or percussion notes.

It can be converted into a score by delaying each element and composing in parallel. An explicit duration has to be provided.

Scores

Meta-information

It is often desirable to annotate music with extraneous information, such as title, creator or, key or time signature. Also, it is often useful to mark scores with structural information such as movement numbers, rehearsal marks or general annotations. In the Music Suite these are grouped together under the common label meta-information.

The notion of meta-data used in the Music Suite is more extensive than just static values: any Transformable container can be wrapped, and the meta-data will be transformed when the annotated value is transformed. This is why meta-data is often variable values, such as Reactive or Behavior.

All time structures in the Suite support an arbitrary number of meta-data fields, indexed by type. All meta-information is required to satisfy the Typeable, so that meta-data can be packed and unpacked dynamically), and Monoid, so that values can be created and composed without having to worry about meta-data. The mempty value is implicitly chosen if no meta-information of the given type has been entered: for example the default title is empty, the default time signature is 4/4. If two values annotated with meta-data are composed, their associated meta-data maps are composed as well, using the <> operator on each of the types.

The distinction between ordinary musical data and meta-data is not always clear cut. As a rule of thumb, meta-events are any kind of event that does not directly affect how the represented music sounds when performed. However they might affect the appearance of the musical notation. For example, a clef is meta-information, while a slur is not. A notable exception to this rule is meta-events affecting tempo such as metronome marks and fermatas, which usually do affect the performance of the music.

Title

Title, subtitle etc is grouped together as a single type Title, thus an arbitrary number of nested titles is supported. The simplest way to add a title is to use the functions title, subtitle, subsubtitle and so son.

Attribution

Similar to titles, the attribution of the creators of music can be annotated according to description such as composer, lyricist, arranger etc. More generally, attribution or attributions can be used to embed arbitrary (profession, name) mappings.

Key signatures

Time signatures

Tempo

Fermatas, caesuras and breathing marks

TODO

Ritardando and accellerando

TODO

Rehearsal marks

TODO

Barlines and repeats

There is generally no need to enter bars explicitly, as this information can be inferred from other meta-information. Generally, the following meta-events (in any part), will force a change of bar:

- Key signature changes

- Time signature changes

- Tempo changes

- Rehearsal marks

However, the user may also enter explicit bar lines using the following functions:

Whenever a bar line is created as a result of a meta-event, an shorted time signature may need to be inserted as in:

compress 4 $ timeSignature (4/4) (scat [c,d,e,c,d,e,f,d,g,d]) |> timeSignature (3/4) (scat [a,g,f,g,f,e])TODO repeats

Clefs

To set the clef for a whole passage, use clef. The clef is used by most notation backends and ignored by audio backends.

To set the clef for a preexisting passage in an existing score, use clefDuring.

TODO example

Annotations

Annotations are simply textual values attached to a specific section of the score. In contrast to other types of meta-information annotations always apply to the whole score, not to a single part. To annotate a score use annotate, to annotate a specific span, use annotateSpan.

Annotations are invisible by default. To show annotations in the generated output, use showAnnotations.

Custom meta-information

Meta-information is not restricted to the types described above. In fact, the user can add meta-information of any type that satisfies the AttributeClass constraint, including user-defined types. Meta-information is required to implement Monoid. The mempty value is used as a default value for the type, while the mappend function is used to combine the default value and all values added by the user.

Typically, you want to use a monoid similar to Maybe, First or Last, but not one derived from the list type. The reason for this is that meta-scores compose, so that getMeta (x <> y) = getMeta x <> getMeta y.

Import and export

The standard distribution (installed as part of music-suite) of the Music Suite includes a variety of input and output formats. There are also some experimental formats, which are distributed in separate packages, these are marked as experimental below.

The conventions for input or output formats is similar to the convention for properties (TODO ref above): for any type a and format T a, input formats are defined by an is constraint, and output format by a has constraint. For example, types that can be exported to Lilypond are defined by the constraint HasLilypond a, while types that can be imported from MIDI are defined by the constraint IsMidi a.

MIDI

All standard representations support MIDI input and output. The MIDI representation uses HCodecs and the real-time support uses hamid.

Beware that MIDI input may contain time and pitch values that yield a non-readable notation, you need an sophisticated piece of analysis software to convert raw MIDI input to quantized input.

Lilypond

All standard representations support Lilypond output. The lilypond package is used for parsing and pretty printing of Lilypond syntax. Lilypond is the recommended way of rendering music notation.

Lilypond input is not available yet but a subset of the Lilypond language will hopefully be added soon.

An example:

toLilypondString $ asScore $ scat [c,d,e]<<

\new Staff { <c'>1 <d'>1 <e'>1 }

>>MusicXML

All standard representations support MusicXML output. The musicxml2 package is used for parsing and pretty printing.

The output is fairly complete, with some limitations (reports welcome). There are no plans to support input in the near future.

Beware of the extreme verboseness of XML, for example:

toMusicXmlString $ asScore $ scat [c,d,e]<?xml version='1.0' ?>

<score-partwise>

<movement-title>Title</movement-title>

<identification>

<creator type="composer">Composer</creator>

</identification>

<part-list>

<score-part id="P1">

<part-name></part-name>

</score-part>

</part-list>

<part id="P1">

<measure number="1">

<attributes>

<key>

<fifths>0</fifths>

<mode>major</mode>

</key>

</attributes>

<attributes>

<divisions>768</divisions>

</attributes>

<direction>

<direction-type>

<metronome>

<beat-unit>quarter</beat-unit>

<per-minute>60</per-minute>

</metronome>

</direction-type>

</direction>

<attributes>

<time symbol="common">

<beats>4</beats>

<beat-type>4</beat-type>

</time>

</attributes>

<note>

<pitch>

<step>C</step>

<alter>0.0</alter>

<octave>4</octave>

</pitch>

<duration>3072</duration>

<voice>1</voice>

<type>whole</type>

</note>

</measure>

<measure number="2">

<note>

<pitch>

<step>D</step>

<alter>0.0</alter>

<octave>4</octave>

</pitch>

<duration>3072</duration>

<voice>1</voice>

<type>whole</type>

</note>

</measure>

<measure number="3">

<note>

<pitch>

<step>E</step>

<alter>0.0</alter>

<octave>4</octave>

</pitch>

<duration>3072</duration>

<voice>1</voice>

<type>whole</type>

</note>

</measure>

</part>

</score-partwise>ABC Notation

ABC notation (for use with abcjs or similar engines) is still experimental.

Guido

Guido output (for use with the GUIDO engine) is not supported yet. This would be useful, as it allow real-time rendering of scores.

Vextab

Vextab output (for use with Vexflow) is not supported yet.

Sibelius

The music-sibelius package provides experimental import of Sibelius scores (as MusicXML import is not supported).

Related work and libraries

The Music Suite is indebted to many other previous libraries and computer music environments, particularly Common Music, PWGL, nyquist, music21, Lilypond and Abjad. Some of the ideas for the quantization algorithms came from Fomus.

The work of Paul Hudak and the the Yale Haskell group, including Haskore, Euterpea is a major influence. The and temporal-media package is a similar take on these ideas. The popular Tidal language provide a way of expressing infinite time structures, similar to the ones defined in music-score.

The idea of defining a custom internal representation, but relying on standardized formats for input and output is influenced by Pandoc. The idea of splitting the library into a set of packages (and the name) comes from the Haskell Suite.

The temporal structures, their instances and more general design philosophy comes from Conal Elliott's Reactive (and its predecessors). Brent Yorgey's Diagrams provided the separation of points and vectors which was a main influence.

Copyright Hans Jacob Höglund 2012–2015

This documentation is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.